Table Of Content

- Interrupted Time Series Design

- Pretest-Posttest Design

- Interrupted Time Series

- QUASI-EXPERIMENTAL DESIGNS FOR PROSPECTIVE EVALUTION OF INTERVENTIONS

- What Are the Threats to Establishing Causality When Using Quasi-experimental Designs in Medical Informatics?

- Experimental and quasi-experimental designs in implementation research

Variables a and b should assess similar constructs; that is, the two measures should be affected by similar factors and confounding variables except for the effect of the intervention. Taking our example, variable a could be pharmacy costs and variable b could be the length of stay of patients. If our informatics intervention is aimed at decreasing pharmacy costs, we would expect to observe a decrease in pharmacy costs but not in the average length of stay of patients. However, a number of important confounding variables, such as severity of illness and knowledge of software users, might affect both outcome measures.

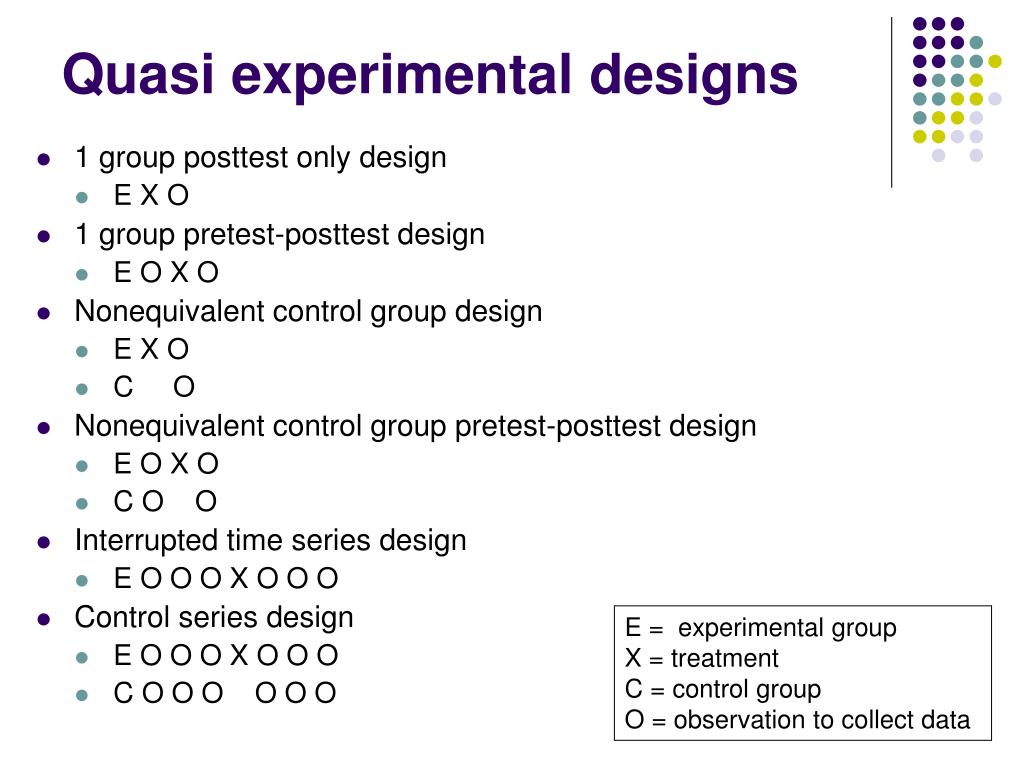

Interrupted Time Series Design

If at the end of the study there was a difference in the two classes’ knowledge of fractions, it might have been caused by the difference between the teaching methods—but it might have been caused by any of these confounding variables. The main advantage of this design is that it controls for potentially different time-varying confounding effects in the intervention group and the comparison group. In our example, measuring points O1 and O2 would allow for the assessment of time-dependent changes in pharmacy costs, e.g., due to differences in experience of residents, preintervention between the intervention and control group, and whether these changes were similar or different. In some implementation science contexts, policy-makers or administrators may not be willing to have a subset of participating patients or sites randomized to a control condition, especially for high-profile or high-urgency clinical issues. Quasi-experimental designs allow implementation scientists to conduct rigorous studies in these contexts, albeit with certain limitations. We briefly review the characteristics of these designs here; other recent review articles are available for the interested reader (e.g. Handley et al., 2018).

Pretest-Posttest Design

For instance, providing public healthcare to one group and withholding it to another in research is unethical. A quasi-experimental design would examine the relationship between these two groups to avoid physical danger. Instead of allocating these patients at random, you uncover pre-existing psychotherapist groups in the hospitals. Clearly, there’ll be counselors who are eager to undertake these trials as well as others who prefer to stick to the old ways. This means that each person has an equivalent chance of being assigned to the experimental group or the control group, depending on whether they are manipulated or not.

Interrupted Time Series

For example, consider a hypothetical RCT that aims to evaluate the implementation of a training program for cognitive behavioral therapy (CBT) in community clinics. Randomizing at the patient level for such a trial would be inappropriate due to the risk of contamination, as providers trained in CBT might reasonably be expected to incorporate CBT principles into their treatment even to patients assigned to the control condition. Randomizing at the provider level would also risk contamination, as providers trained in CBT might discuss this treatment approach with their colleagues. While such clustering minimizes the risk of contamination, it can unfortunately create commensurate problems with confounding, especially for trials with very few sites to randomize. Stratification may be used to at least partially address confounding issues in cluster- randomized and more traditional trials alike, by ensuring that intervention and control groups are broadly similar on certain key variables. Furthermore, such allocation schemes typically require analytic models that account for this clustering and the resulting correlations among error structures (e.g., generalized estimating equations [GEE] or mixed-effects models; Schildcrout et al., 2018).

Create a file for external citation management software

Nonetheless, there are design strategies for non-experimental studies that can be undertaken to improve the internal validity while not eliminating considerations of external validity. There is a relative hierarchy within these categories of study designs, with category D studies being sounder than categories C, B, or A in terms of establishing causality. Thus, if feasible from a design and implementation point of view, investigators should aim to design studies that fall in to the higher rated categories.

However, it doesn't use randomization, the lack of which is a crucial element for quasi-experimental design. A quasi-experimental design allows researchers to take advantage of previously collected data and use it in their study. For instance, it's impractical to trawl through large sample sizes of participants without using a particular attribute to guide your data collection.

Effectiveness of implementing evidence-based approaches to promote physical activity in a Midwestern micropolitan ... - BMC Public Health

Effectiveness of implementing evidence-based approaches to promote physical activity in a Midwestern micropolitan ....

Posted: Fri, 19 Apr 2024 01:43:12 GMT [source]

Zombre et al (52) maintained a large number of control number of sites during the extended study period and were able to look at variations in seasonal trends as well as clinic-level characteristics, such as workforce density and sustainability. In addition to including a control group, several analysis phase strategies can be employed to strengthen causal inference including adjustment for time varying confounders and accounting for auto correlation. It can be useful to obtain pre-test data or baseline characteristics to improve the comparability of the two groups.

Experimental and quasi-experimental designs in implementation research

What factors will affect the effectiveness of using ChatGPT to solve programming problems? A quasi-experimental ... - Nature.com

What factors will affect the effectiveness of using ChatGPT to solve programming problems? A quasi-experimental ....

Posted: Mon, 26 Feb 2024 08:00:00 GMT [source]

The design involved matching clinics by size and an inverse roll-out, to balance out the sizes across the four groups. The inverse roll-out involved four strata of clinics, grouped by size with two clinics in each strata. The roll-out was sequenced across these eight clinics, such that one smaller clinics began earlier, with three clinics of increasing size getting the intervention afterwards.

For example, Li, Raymond, and Peter Bergman explore how algorithm design can improve the quality of interview decisions in the context of professional services hiring. They find that while traditional supervised learning systems — which look for workers who match historical patterns of success in the firm’s training data — select higher-quality workers relative to human hiring, they are also far less likely to select applicants who are Black or Hispanic. In contrast, reinforcement learning and contextual bandit models — which value learning about workers who have not traditionally been represented in the firm’s training data — are able to deliver similar improvements in worker quality while also distributing job opportunities more broadly. Later, the focus shifted to machine learning systems, including “supervised learning” systems trained to make predictions based on large datasets of human-labeled examples. As computational power increased, deep learning algorithms became increasingly successful, leading to an explosion of interest in AI in the 2010s. A set of measurements taken at intervals over a period of time that are interrupted by a treatment.

“Resemblance” is the definition of “quasi.” Individuals are not randomly allocated to conditions or orders of conditions, even though the regression analysis is changed. As a result, quasi-experimental research is research that appears to be experimental but is not. In a classic 1952 article, researcher Hans Eysenck pointed out the shortcomings of the simple pretest-posttest design for evaluating the effectiveness of psychotherapy. The study by Grant et al et al uses a variant of the SWD for which individuals within a setting are enumerated and then randomized to get the intervention.

Therefore, hospital personnel often implement one or more interventions, and if a decline in the rate occurs, they may mistakenly conclude that the decline is causally related to the intervention. This design is employed when it is not ethical or logistically feasible to conduct randomized controlled trials. Researchers typically employ it when evaluating policy or educational interventions, or in medical or therapy scenarios.

An introductory chapter describes the valuable role these types of studies have played in social work, going back to the 1930s, and continuing to the present. Subsequent chapters describe the major features of individual quasi-experimental designs, the types of questions they are capable of answering, and their strengths and limitations. Each discussion of these designs presented in the abstract is subsequently illustrated with descriptions of real examples of their use as published in the social work literature and related fields. By linking the discussion of quasi-experimental designs in the abstract to actualapplications to evaluate the outcomes of social services, the usefulness and vitality of these research methods comes alive to the reader. While this volume could be used as a research textbook, it will also have great value to practitioners seeking a greater conceptual understanding of the quasi-experimental studies they frequently read about in the social work literature.

This design involves studying the effects of an intervention or event that occurs naturally, without the researcher’s intervention. For example, a researcher might study the effects of a new law or policy that affects certain groups of people. Quasi-experimental design is a research method that seeks to evaluate the causal relationships between variables, but without the full control over the independent variable(s) that is available in a true experimental design.

No comments:

Post a Comment